We can use the `InputLayer()` class to explicitly define the input layer of a Keras sequential model.

Note that in conjunction with `initial_epoch`, `epochs` is to be understood as the 'final epoch'. The model is not trained for a number of iterations given by `epochs`, but merely until the epoch of index `epochs` is reached.
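To make the 'final epoch' reading concrete, here is a minimal pure-Python sketch of which epoch indices a training loop would run. The helper name `epochs_to_run` is hypothetical and not part of Keras; it only mirrors the semantics described above.

```python
def epochs_to_run(initial_epoch, epochs):
    """Return the epoch indices a fit-style loop would execute.

    Hypothetical helper (not a Keras function): training starts at
    `initial_epoch` and stops once the epoch of index `epochs` is reached.
    """
    return list(range(initial_epoch, epochs))


# Fresh run: with initial_epoch=0, epochs=5 means five passes (indices 0..4).
fresh = epochs_to_run(initial_epoch=0, epochs=5)

# Resumed run: epochs=5 is the final epoch, so only two passes remain.
resumed = epochs_to_run(initial_epoch=3, epochs=5)

print(fresh)
print(resumed)
```

So resuming with `initial_epoch=3` and `epochs=5` trains for two more epochs, not five.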

I guess the problem is the 3000-series cards and their compatibility with CUDA and the neural-network libraries. CUDA spec: nvcc: NVIDIA (R) Cuda compiler driver. Copyright (c) 2005-2019 NVIDIA Corporation. Built on Wed_Oct_23_19:32:27_Pacific_Daylight_Time_2019. Cuda compilation tools, release 10.2, V10.2.89.

So in total we'll have an input layer and the output layer. An epoch is an iteration over the entire x and y data provided.
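As a rough illustration of what one epoch means, the sketch below walks over all of `x` and `y` exactly once in batches. It is plain Python rather than Keras code, the generator name `iterate_epoch` is made up for this example, and it assumes no shuffling, whereas a real training loop would usually shuffle the data.

```python
def iterate_epoch(x, y, batch_size):
    """Yield (x_batch, y_batch) pairs that together cover x and y once."""
    for start in range(0, len(x), batch_size):
        yield x[start:start + batch_size], y[start:start + batch_size]


# Toy data: ten samples with matching targets.
x = list(range(10))
y = [v * 2 for v in x]

# One epoch with batch_size=4 gives batches of 4, 4 and 2 samples.
batches = list(iterate_epoch(x, y, batch_size=4))

# Every sample is seen exactly once during the epoch.
seen = [v for x_batch, _ in batches for v in x_batch]
print(len(batches), seen == x)
```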

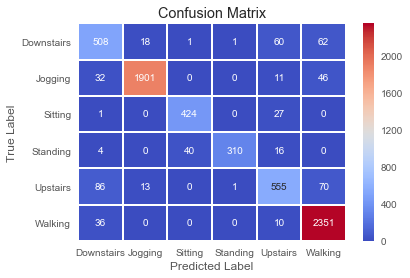

The simplest model in Keras is the sequential model, which is built by stacking layers sequentially. In the next example, we are stacking three dense layers, and Keras builds an implicit input layer with your data, using the `input_shape` parameter. The following example uses a simple Keras Sequential model with MNIST data to classify a given image of a digit between 0 and 9. We're going to be building a sequential model to classify our images, and the architecture is as follows: convolutional input layer, 32 feature maps with a. After training the model, the performance of the model was evaluated.

Just running a few lines with Keras `Sequential()` crashes the Jupyter notebook kernel. At first it was GPU memory, which filled up completely (even though it is a 3090 with 24 GB). Then I took some precautions like `config = tf.compat.v1.ConfigProto()` and `Session = tf.compat.v1.Session(config=config)`, and VRAM stopped hitting the limit. My Keras and TensorFlow versions are 2.4.3 and '2.5.0-dev20210312' respectively. At the last line, `Y_train,y_test = y*0.80)],y*0.80):]`, the kernel dies.
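The split line above is truncated in the source, so its exact form is unknown. Assuming it was meant to be an 80/20 slice of `y`, one common way to write such a split in plain Python looks like this. The function name `split_80_20` and the toy data are assumptions for illustration only.

```python
def split_80_20(values, train_fraction=0.80):
    """Split a sequence into a leading train part and a trailing test part."""
    # Index of the first test element; int() truncates toward zero.
    split_at = int(len(values) * train_fraction)
    return values[:split_at], values[split_at:]


# Toy targets standing in for the real `y`.
y = list(range(10))
y_train, y_test = split_80_20(y)

print(len(y_train), len(y_test))
```

A slice like this is cheap on its own, so if the kernel dies at this step the cause may lie elsewhere (for example in how `y` was built), but that is only a guess.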